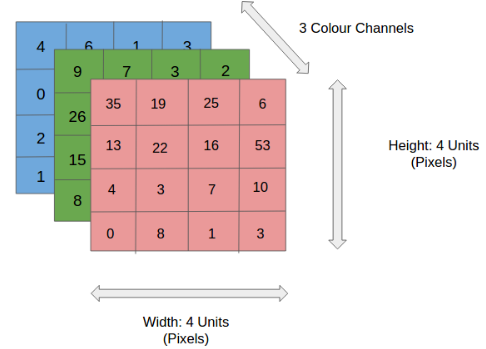

Let's start by unpacking what visual data actually is: Images are matrices!
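To make "images are matrices" concrete, here is a minimal sketch using NumPy. The tiny 4x4 array and the names `image` and `rgb` are made up for illustration; a real photo would simply be a much bigger array of the same kind.

```python
import numpy as np

# A tiny 4x4 grayscale "image": each entry is one pixel's brightness
# (0 = black, 255 = white). The values are invented for illustration.
image = np.array([
    [  0,   0, 255, 255],
    [  0,   0, 255, 255],
    [255, 255,   0,   0],
    [255, 255,   0,   0],
], dtype=np.uint8)

print(image.shape)   # (rows, columns)
print(image[0, 2])   # brightness of the pixel in row 0, column 2

# A colour image adds a third axis: one channel each for red, green and blue.
# Here we just repeat the grayscale values three times.
rgb = np.stack([image, image, image], axis=-1)
print(rgb.shape)     # (rows, columns, channels)
```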

That means we have all the information about what is in the image right there, in the numbers describing each and every pixel, right? Wrong!

The problem with images is that the most important information is not what we can learn from each individual pixel but what we can learn from the spatial interaction between them.

Thus, the (relevant) information contained in an image is not the sum of all pixel values.
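A quick way to convince yourself: shuffle the pixels of an image. The sketch below (toy data, hypothetical helper `horizontal_jumps`) keeps every pixel value but scrambles where the pixels sit. The sum and the set of values stay the same, yet the clean vertical edge is gone.

```python
import numpy as np

rng = np.random.default_rng(0)

# A 6x6 toy image with one vertical edge: dark left half, bright right half.
image = np.zeros((6, 6), dtype=np.int64)
image[:, 3:] = 255

# The same pixels, moved to random positions.
shuffled = rng.permutation(image.ravel()).reshape(image.shape)

# Pixel-level statistics cannot tell the two apart.
same_sum = bool(image.sum() == shuffled.sum())
same_values = np.array_equal(np.sort(image.ravel()), np.sort(shuffled.ravel()))

def horizontal_jumps(img):
    """Count neighbouring pixel pairs in a row whose values differ."""
    return int(np.count_nonzero(np.diff(img, axis=1)))

print(same_sum, same_values)
print(horizontal_jumps(image))      # one jump per row, at the edge
print(horizontal_jumps(shuffled))   # scattered jumps, no single edge
```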

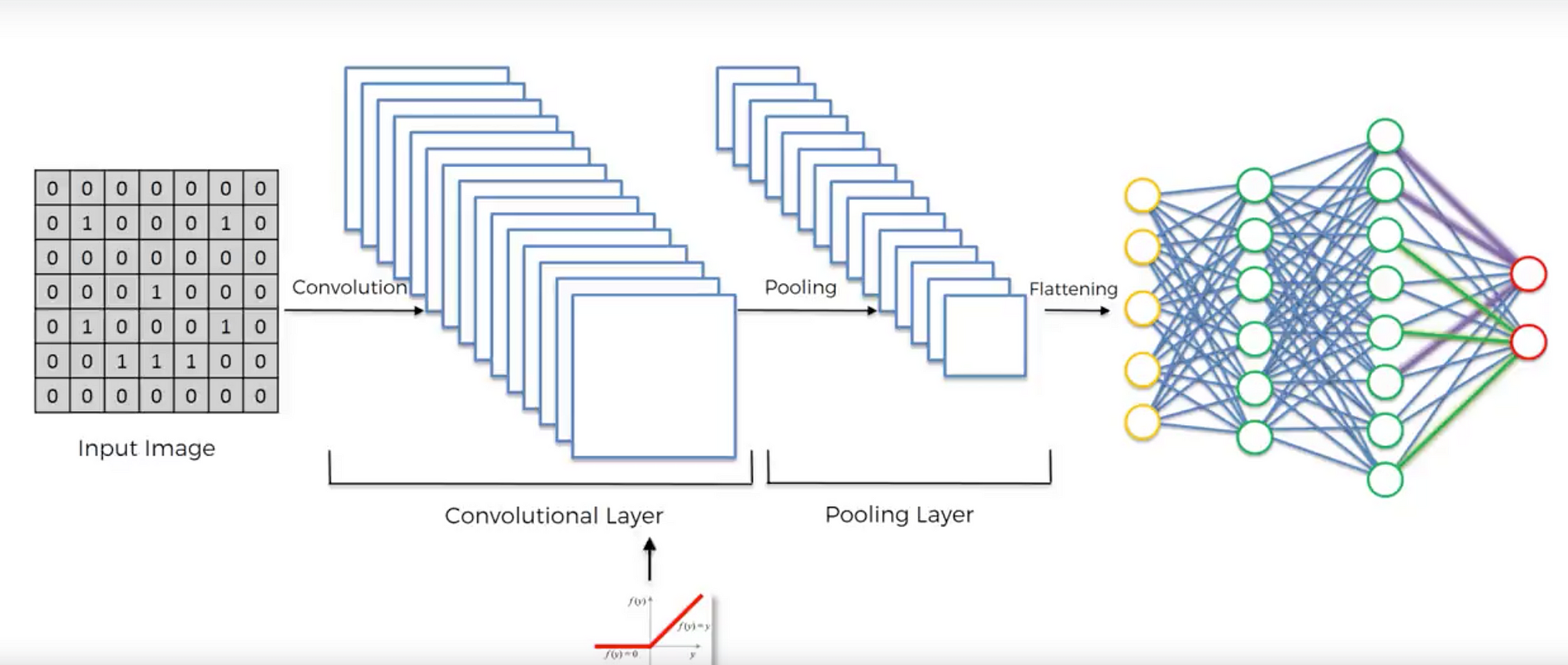

One of the most widely used and best-performing approaches to computer vision is convolution, and more specifically deep convolutional neural networks (CNNs).

A full CNN architecture

To understand which relevant features are hidden in the image, we need to understand how individual pixels interact: we need to identify patterns.

For this we use convolutions. This explanation will be rather simplistic but good enough for our purposes.

Now, imagine you make a large banner with a rectangular hole in it and start sliding it over a brick wall, left to right, top to bottom. Obviously, you will see very different patterns at different times. Every time you see something interesting, you note it down. That's convolution in a nutshell.
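The sliding window can be sketched in a few lines of NumPy. This is a simplified illustration, not how a deep learning library implements it: no padding, stride 1, and (as in most CNN libraries) the kernel is not flipped, so strictly speaking it is a cross-correlation. The function name `convolve2d` and the toy `vertical_edge` kernel are assumptions made for this example.

```python
import numpy as np

def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and
    record how strongly each window matches the kernel."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):              # top to bottom
        for j in range(out_w):          # left to right
            window = image[i:i + kh, j:j + kw]   # what we see through the hole
            out[i, j] = np.sum(window * kernel)  # "note down" the match
    return out

# Toy image: dark on the left, bright on the right.
image = np.array([
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
], dtype=float)

# A kernel that responds where brightness increases from left to right.
vertical_edge = np.array([
    [-1, 1],
    [-1, 1],
], dtype=float)

response = convolve2d(image, vertical_edge)
print(response)
```

The response is non-zero only in the middle column, exactly where the window straddles the dark-to-bright boundary.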

A really great post about CNNs from different viewpoints:

- Imagine now, instead of one window, you have many windows with different shapes to detect different features...
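"Many windows" means many kernels applied to the same image, each producing its own feature map. A rough sketch, assuming NumPy 1.20 or newer for `sliding_window_view`; the two hand-written filters are illustrative only, since a real CNN learns its filters during training.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# Toy image: dark on top, bright at the bottom.
image = np.array([
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [1, 1, 1, 1],
], dtype=float)

# Two hand-made 2x2 filters stacked into one array of shape (2, 2, 2).
filters = np.stack([
    np.array([[-1, 1], [-1, 1]], dtype=float),   # looks for vertical edges
    np.array([[-1, -1], [1, 1]], dtype=float),   # looks for horizontal edges
])

# All 2x2 windows of the image at once: shape (3, 3, 2, 2).
windows = sliding_window_view(image, (2, 2))

# Multiply every window with every filter and sum: one 3x3 map per filter.
feature_maps = np.einsum("ijkl,fkl->fij", windows, filters)

print(feature_maps[0])   # vertical-edge filter: finds nothing here
print(feature_maps[1])   # horizontal-edge filter: lights up on the middle row
```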

Adding MaxPooling

The pooling operation used in convolutional neural networks is a big mistake and the fact that it works so well is a disaster. - Geoffrey Hinton

- Convolution is great. But what if we want to capture information about the same objects when they appear from different perspectives?

Max pooling helps us

- preserve features

- achieve spatial invariance

- reduce the size of the feature maps and the number of downstream parameters (which helps prevent overfitting)
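The three points above can be seen in a small sketch of non-overlapping 2x2 max pooling. The helper `max_pool2d` and the toy feature maps are assumptions for illustration. Note that the invariance is only local: a feature can move around inside its pooling block without changing the output, but not across blocks.

```python
import numpy as np

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; rows/columns that do not fill a block are cropped."""
    h, w = feature_map.shape
    h, w = h - h % size, w - w % size
    blocks = feature_map[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))   # keep the strongest response per block

# Two strong activations in a 4x4 feature map...
original = np.array([
    [0, 9, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 5],
])

# ...and the same activations, each shifted by one pixel inside its block.
shifted = np.array([
    [0, 0, 0, 0],
    [9, 0, 0, 0],
    [0, 0, 5, 0],
    [0, 0, 0, 0],
])

pooled_original = max_pool2d(original)
pooled_shifted = max_pool2d(shifted)

print(pooled_original)   # 2x2 instead of 4x4, strong features preserved
print(np.array_equal(pooled_original, pooled_shifted))
```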

Now, let's check out something fun:

The final layer of the convolutional part creates the input we need for a neural net: it's a simple flattening operation.
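Flattening is nothing more than a reshape. A minimal sketch, assuming two pooled 3x3 feature maps filled with placeholder numbers:

```python
import numpy as np

# Pretend these are 2 pooled feature maps of size 3x3 (placeholder values).
feature_maps = np.arange(2 * 3 * 3).reshape(2, 3, 3)

# Flatten: line up all values in one long vector.
flat = feature_maps.reshape(-1)
print(flat.shape)

# With a batch of images, keep the batch axis and flatten the rest.
batch = np.stack([feature_maps, feature_maps])    # shape (2, 2, 3, 3)
flat_batch = batch.reshape(batch.shape[0], -1)    # shape (2, 18)
print(flat_batch.shape)
```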

From here we use an ANN for classification or regression... or whatever you are able to put together.
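As a rough idea of what that ANN does with the flattened vector, here is a forward pass through one hidden layer and a softmax output. Everything in it is an assumption for illustration: the layer sizes, the three classes, and above all the random, untrained weights, so the resulting probabilities are meaningless until the network is trained.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for the flattened feature vector (18 values, made up).
features = rng.normal(size=18)

n_hidden, n_classes = 8, 3   # hypothetical sizes

# Randomly initialised weights; training would adjust these.
W1 = rng.normal(scale=0.1, size=(n_hidden, features.size))
b1 = np.zeros(n_hidden)
W2 = rng.normal(scale=0.1, size=(n_classes, n_hidden))
b2 = np.zeros(n_classes)

hidden = np.maximum(0, W1 @ features + b1)   # dense layer + ReLU
logits = W2 @ hidden + b2                    # one score per class

# Softmax turns scores into probabilities (subtract the max for stability).
exp = np.exp(logits - logits.max())
probs = exp / exp.sum()

print(probs)            # one probability per class
print(probs.argmax())   # index of the predicted class
```

For regression you would drop the softmax and use a single linear output instead.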

The end.